The Problem With Feature-Based EM Platform Comparisons

Most electronic monitoring system evaluations start with a feature checklist. GPS tracking — check. Geofencing — check. Alerts — check. The problem is that every vendor on the market checks the same boxes. What procurement teams rarely evaluate is how those features behave under operational stress: 3,000 simultaneous subjects, a night shift with four operators, a connectivity dead zone in a rural district. These are the conditions where platform architecture actually matters, and where most systems quietly fall apart.

Scalability Is an Architecture Decision, Not a Sales Claim

We have seen pilot deployments succeed with 200 subjects, only to collapse when expanded to 2,000. The reason is almost never a single bottleneck — it is the accumulation of design choices that were acceptable at small scale but catastrophic at national scale. Message queues that cannot handle burst traffic during shift changes. Database queries that degrade linearly with subject count. Alert engines that generate so many false positives — often exceeding 60% of total alerts — that operators stop trusting the system entirely. A platform built for institutional deployment must demonstrate horizontal scalability with published benchmarks, not just claim it in a slide deck.
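To see why a message queue that copes with a pilot can fail at scale, consider a minimal sketch of backlog growth during a burst. The function name, the per-tick arrival figures, and the fixed drain rate are illustrative assumptions, not measurements from any real deployment.

```python
from typing import Iterable


def peak_backlog(arrivals_per_tick: Iterable[int], drain_per_tick: int) -> int:
    """Return the largest queue backlog seen while processing the arrivals."""
    backlog = 0
    peak = 0
    for arrivals in arrivals_per_tick:
        # Messages that cannot be drained in this tick carry over to the next.
        backlog = max(0, backlog + arrivals - drain_per_tick)
        peak = max(peak, backlog)
    return peak


# Hypothetical shift-change burst: two quiet ticks, two busy ticks, two quiet ticks.
pilot_traffic = [10, 10, 60, 60, 10, 10]
scaled_traffic = [n * 10 for n in pilot_traffic]  # same pattern, 10x the subjects

# Assumed fixed processing capacity of 50 messages per tick in both cases.
pilot_peak = peak_backlog(pilot_traffic, drain_per_tick=50)
scaled_peak = peak_backlog(scaled_traffic, drain_per_tick=50)
```

With these made-up numbers the pilot backlog clears once the burst ends, while the scaled workload exceeds drain capacity on every tick and never catches up. That is the kind of behavior a published benchmark should expose.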

Operational Continuity Under Real Conditions

Electronic monitoring is a 24/7 obligation. There is no maintenance window at 2 AM when a high-risk subject is under house arrest. The platform must handle component failures gracefully — database failover, network partition recovery, degraded-mode operation when connectivity drops. Ask any vendor what happens when their primary database goes offline during peak hours. The answer reveals more about their architecture than any feature comparison ever will.
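One common way to approach degraded-mode operation is store-and-forward buffering: events are held locally while the link is down and replayed in order once it returns. The sketch below is only an illustration of that idea; the class name, the capacity, and the `send` callback are hypothetical and do not describe any particular vendor's implementation.

```python
from collections import deque
from typing import Callable, Deque, Dict


class StoreAndForwardBuffer:
    """Hold events while the uplink is down and replay them in order later."""

    def __init__(self, capacity: int = 1000) -> None:
        # A bounded deque silently drops the oldest event once capacity is
        # reached; a real system would need an explicit policy for that case.
        self._pending: Deque[Dict] = deque(maxlen=capacity)

    def record(self, event: Dict) -> None:
        self._pending.append(event)

    def flush(self, send: Callable[[Dict], bool]) -> int:
        """Try to deliver pending events; stop at the first failed send."""
        sent = 0
        while self._pending:
            if not send(self._pending[0]):
                break  # still offline: keep the event for the next attempt
            self._pending.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._pending)


buffer = StoreAndForwardBuffer(capacity=100)
for seq in range(3):
    buffer.record({"seq": seq})

delivered = []
sent_while_offline = buffer.flush(lambda event: False)  # link is down
sent_after_recovery = buffer.flush(lambda event: delivered.append(event) is None)
```

The point of the design is that a connectivity drop delays data rather than losing it, and that replay preserves the original event order.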

Device-Agnostic vs. Device-Locked Platforms

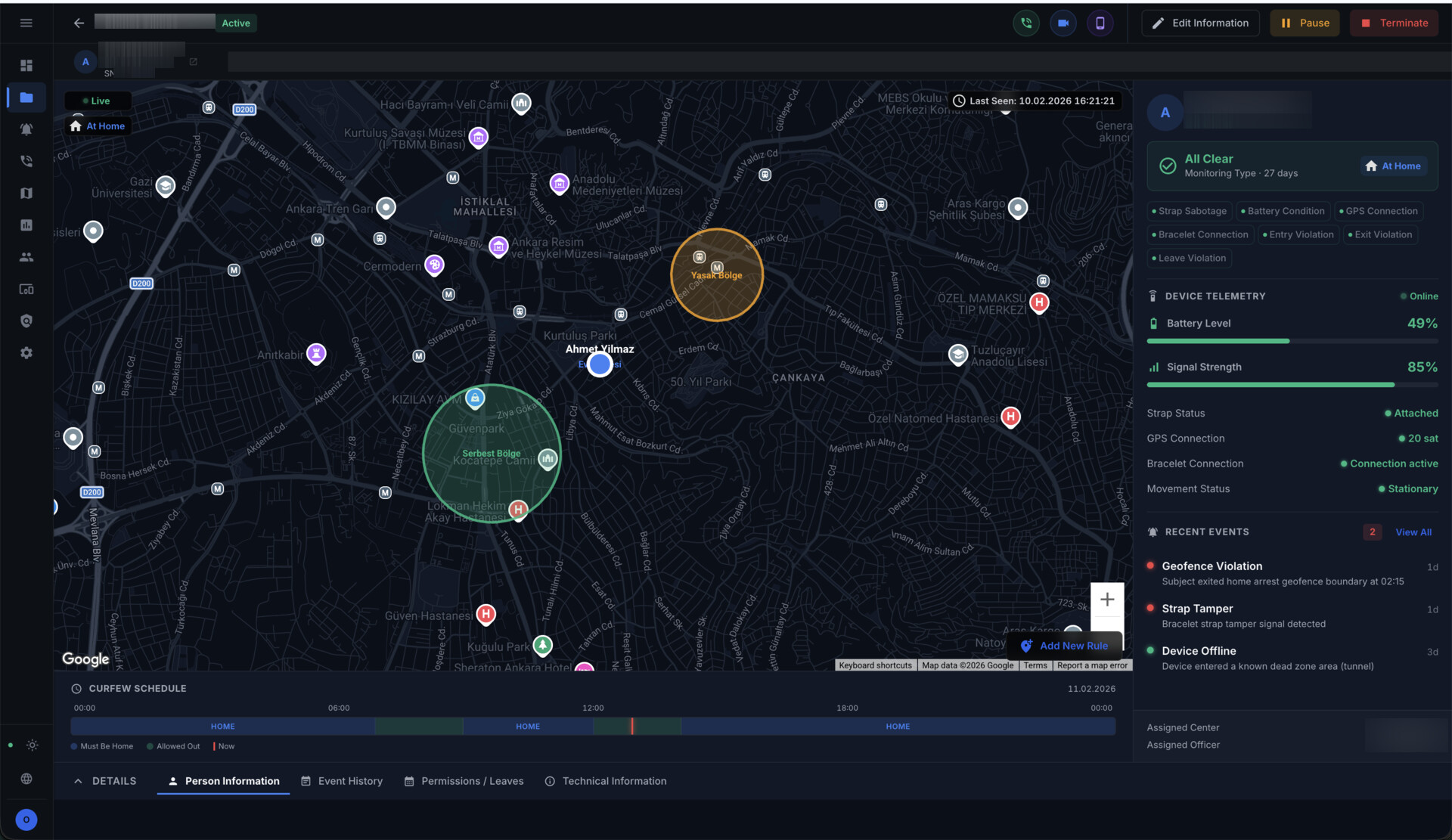

Some platforms are tightly coupled to a single hardware vendor's devices. This creates procurement risk, limits negotiating leverage, and makes technology transitions painful. An institutional-grade electronic monitoring platform should support multiple device types through standardized integration protocols (GPS ankle monitors, home beacon units, one-piece trackers, and smartphone-based monitoring), all managed from the same command center. The operational benefit is clear: agencies can run mixed-mode programs in which high-risk subjects wear dedicated devices while lower-risk populations use mobile monitoring, without separate systems or separate training.
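A device-agnostic design is often built around an adapter layer that normalizes each device's payload into one internal record. The following is a minimal sketch under that assumption; the payload formats, field names, and class names are all hypothetical and are not taken from any real device protocol.

```python
import json
from dataclasses import dataclass
from typing import Dict, Protocol


@dataclass(frozen=True)
class LocationFix:
    """Normalized record that the rest of the platform works with."""
    subject_id: str
    lat: float
    lon: float
    source: str


class DeviceAdapter(Protocol):
    def parse(self, raw: str) -> LocationFix: ...


class AnkleMonitorAdapter:
    """Assumes a hypothetical 'id,lat,lon' comma-separated payload."""

    def parse(self, raw: str) -> LocationFix:
        subject_id, lat, lon = raw.split(",")
        return LocationFix(subject_id, float(lat), float(lon), "ankle_monitor")


class SmartphoneAdapter:
    """Assumes a hypothetical JSON payload from a monitoring app."""

    def parse(self, raw: str) -> LocationFix:
        data = json.loads(raw)
        return LocationFix(
            data["subject"], float(data["latitude"]), float(data["longitude"]), "smartphone"
        )


# Supporting a new device type means registering one more adapter here.
ADAPTERS: Dict[str, DeviceAdapter] = {
    "ankle_monitor": AnkleMonitorAdapter(),
    "smartphone": SmartphoneAdapter(),
}


def ingest(device_type: str, raw: str) -> LocationFix:
    return ADAPTERS[device_type].parse(raw)


fix_a = ingest("ankle_monitor", "S-1,51.5,-0.12")
fix_b = ingest("smartphone", '{"subject": "S-2", "latitude": 48.85, "longitude": 2.35}')
```

Because everything downstream sees only `LocationFix`, alerting and operator tooling stay the same regardless of which hardware produced the data.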

Total Cost of Ownership Beyond Licensing

The license fee is typically 15–20% of the total cost of operating an EM program over five years. Training, integration, device logistics, operator staffing, and system maintenance account for the rest. Platforms that reduce per-subject operational cost through automation of routine tasks, intelligent alert filtering, and streamlined enrollment workflows deliver measurably better ROI than those competing purely on license price. One corrections department we worked with reduced its average alert investigation time from 12 minutes to under 3 minutes after switching to a platform with contextual alert prioritization.
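The arithmetic behind these figures can be made explicit. The sketch below assumes a license quote and an alert volume purely for illustration; only the 15–20% share and the 12-minute and 3-minute investigation times come from the text above.

```python
def total_cost_from_license(license_cost: float, license_share: float) -> float:
    """Estimate total program cost when the license is a known share of it."""
    if not 0 < license_share <= 1:
        raise ValueError("license_share must be in (0, 1]")
    return license_cost / license_share


def operator_hours_saved(alerts: int, minutes_before: float, minutes_after: float) -> float:
    """Operator hours saved when per-alert investigation time drops."""
    return alerts * (minutes_before - minutes_after) / 60


# Hypothetical five-year license quote of 1,000,000 (any currency).
low_estimate = total_cost_from_license(1_000_000, 0.20)   # license is 20% of total
high_estimate = total_cost_from_license(1_000_000, 0.15)  # license is 15% of total

# Hypothetical volume of 1,000 investigated alerts; times are from the text.
hours_saved = operator_hours_saved(1_000, minutes_before=12, minutes_after=3)
```

Under these assumptions the license quote implies a total program cost of roughly 5 to 6.7 times the license fee, and every 1,000 alerts investigated frees about 150 operator hours.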

Evaluation Framework for Decision Makers

Rather than comparing feature lists, we recommend evaluating EM platforms against five operational criteria: time-to-deploy for a new subject (enrollment to active monitoring), mean time to respond to a genuine violation, false positive rate under production load, system availability over a 12-month period, and per-subject cost at target scale. These metrics cut through marketing language and reveal whether a platform can actually support an institutional monitoring program at the scale you need.
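As a rough illustration, the five criteria can be computed from operational records in a few lines. The field names and sample values below are hypothetical, and the false positive rate is taken here to mean the share of all alerts that turned out not to be genuine violations.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class EvaluationData:
    enrollment_minutes: List[float]          # enrollment to active monitoring, per subject
    violation_response_minutes: List[float]  # response time per genuine violation
    total_alerts: int
    false_alerts: int
    downtime_minutes: float
    period_minutes: float                    # length of the observation period
    total_cost: float
    subjects: int


def scorecard(data: EvaluationData) -> Dict[str, float]:
    """Compute the five operational criteria from raw evaluation data."""
    return {
        "time_to_deploy_min": mean(data.enrollment_minutes),
        "mean_time_to_respond_min": mean(data.violation_response_minutes),
        "false_positive_rate": data.false_alerts / data.total_alerts,
        "availability": 1 - data.downtime_minutes / data.period_minutes,
        "cost_per_subject": data.total_cost / data.subjects,
    }


# Made-up sample values for a 12-month (365-day) evaluation period.
sample = EvaluationData(
    enrollment_minutes=[30, 60],
    violation_response_minutes=[4, 6],
    total_alerts=100,
    false_alerts=60,
    downtime_minutes=525.6,
    period_minutes=365 * 24 * 60,
    total_cost=3_000_000,
    subjects=2_000,
)
result = scorecard(sample)
```

Collecting the same inputs from each vendor's pilot or reference deployment makes the comparison depend on measured behavior rather than on feature claims.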